Difference between revisions of "Table Segmentation in Content Manager OnDemand"

(Added explanation for how tweaking segment size works.) |

m (Minor corrections to IBM CMOD Table Segmentation.) |

||

| (2 intermediate revisions by the same user not shown) | |||

| Line 1: | Line 1: | ||

== Introduction == | == Introduction == | ||

In order to keep database queries fast, | In order to keep database queries fast, CMOD uses a concept called "database table segmentation". The term 'table segmentation' refers to splitting extremely large tables into 'segments' of smaller tables for performance and/or ease of maintenance. Although the latest versions of database engines can do this natively, at the time Content Manager OnDemand was created, there was no built-in support for this functionality, so it still uses the old style segmentation (based on date fields) to achieve the scalability and speed that customers require. | ||

When an end user performs a search, CMOD | When an end user performs a search, IBM CMOD queries one or more tables, using the date fields in the submitted search to determine which individual tables to look through to satisfy the request. The date field used to organize the tables is called the 'segment date'. | ||

Before DB2 supported its own table segmentation natively, the Content Manger OnDemand developers decided to split index data into tables of 10 million rows each. Using this method keeps search performance linear, as only the tables containing documents in the date range you're looking for ( for example, 3 months, or 1 year) are actually searched. | Before DB2 supported its own table segmentation natively, the Content Manger OnDemand developers decided to split index data into tables of 10 million rows each. Using this method keeps search performance linear, as only the tables containing documents in the date range you're looking for ( for example, 3 months, or 1 year) are actually searched. | ||

In order to complete queries as quickly as possible, it's important that you minimize the number of tables that are searched. Each additional table is more work for the CPU and Input/Output | In order to complete queries as quickly as possible, it's important that you minimize the number of tables that are searched. Each additional table searched is more work for the CPU and more data transfer from disks (Input/Output, or "I/O") that must be performed -- and delaying the response to the end user. | ||

== Optimizing Segment Size == | == Optimizing Segment Size == | ||

One way to improve query performance is to match the volume of data you ingest each month with | One way to improve query performance is to match the volume of data you ingest each month with an Application Group's segment size. | ||

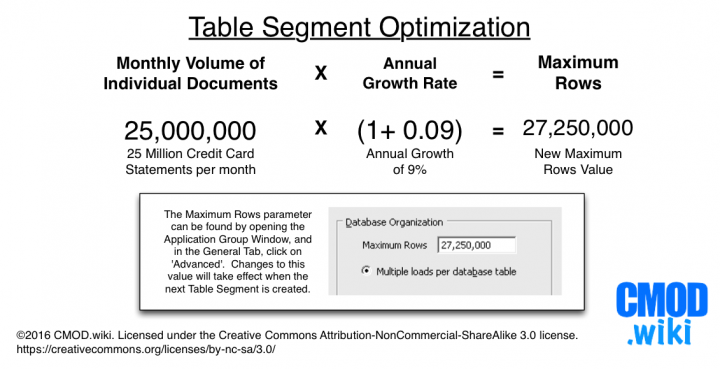

If your monthly volume is more than 10 million documents for a single Application Group, use this formula to estimate your optimal segment size: | If your monthly volume is more than 10 million documents for a single Application Group, use this formula to estimate your optimal segment size: | ||

| Line 17: | Line 17: | ||

Number of Individual Documents loaded per month * 1.10 = Max Rows per database table | Number of Individual Documents loaded per month * 1.10 = Max Rows per database table | ||

The 1.10 in the | The 1.10 in the formula gives you 10% additional room for growth. If your growth rate is higher, adjust it accordingly. If your volumes increase suddenly, you should review the setting. It will take effect for the next database table that is created. | ||

[[File:TableSegmentOptimization.png|720px]] | [[File:TableSegmentOptimization.png|720px]] | ||

| Line 23: | Line 23: | ||

=== How does optimizing segment size work? === | === How does optimizing segment size work? === | ||

Let's say you're a large Content Manager OnDemand | Let's say you're a large IBM Content Manager OnDemand user. You have 40 million customers, and about 25 million receive an invoice in any given month. When you load those invoices into IBM CMOD with a default 'Max Rows' value of 10 million, you create three new tables every month. But wait -- you've been in business for 5 years already, so it's not unreasonable that you might have more than 100 tables full of customer invoice data! | ||

If an employee searches for a customer's invoices over a range of the last 12 months by default, the database must search 36 individual tables to satisfy a single request. Of those 36 tables that are searched, 24 of those tables won't return anything at all! When you multiply the work required to satisfy ONE request across an entire company and all of its customers, the default 'Max Rows' parameter can cause an OnDemand server to quickly become overloaded. | |||

In the same situation, when Content Manager OnDemand is configured with an optimized segment size, one search hits 12 tables, and each table returns a result -- a much better work-to-result ratio, which requires less disk-related I/O and less CPU time. It's better in almost every way. | In the same situation, when Content Manager OnDemand is configured with an optimized segment size, one search hits 12 tables, and each table returns a result -- a much better work-to-result ratio, which requires less disk-related I/O and less CPU time. It's better in almost every way. | ||

Latest revision as of 18:18, 25 September 2020

Introduction

In order to keep database queries fast, CMOD uses a concept called "database table segmentation". The term 'table segmentation' refers to splitting extremely large tables into 'segments' of smaller tables for performance and/or ease of maintenance. Although the latest versions of database engines can do this natively, at the time Content Manager OnDemand was created, there was no built-in support for this functionality, so it still uses the old style segmentation (based on date fields) to achieve the scalability and speed that customers require.

When an end user performs a search, IBM CMOD queries one or more tables, using the date fields in the submitted search to determine which individual tables to look through to satisfy the request. The date field used to organize the tables is called the 'segment date'.

Before DB2 supported its own table segmentation natively, the Content Manger OnDemand developers decided to split index data into tables of 10 million rows each. Using this method keeps search performance linear, as only the tables containing documents in the date range you're looking for ( for example, 3 months, or 1 year) are actually searched.

In order to complete queries as quickly as possible, it's important that you minimize the number of tables that are searched. Each additional table searched is more work for the CPU and more data transfer from disks (Input/Output, or "I/O") that must be performed -- and delaying the response to the end user.

Optimizing Segment Size

One way to improve query performance is to match the volume of data you ingest each month with an Application Group's segment size.

If your monthly volume is more than 10 million documents for a single Application Group, use this formula to estimate your optimal segment size:

Number of Individual Documents loaded per month * 1.10 = Max Rows per database table

The 1.10 in the formula gives you 10% additional room for growth. If your growth rate is higher, adjust it accordingly. If your volumes increase suddenly, you should review the setting. It will take effect for the next database table that is created.

How does optimizing segment size work?

Let's say you're a large IBM Content Manager OnDemand user. You have 40 million customers, and about 25 million receive an invoice in any given month. When you load those invoices into IBM CMOD with a default 'Max Rows' value of 10 million, you create three new tables every month. But wait -- you've been in business for 5 years already, so it's not unreasonable that you might have more than 100 tables full of customer invoice data!

If an employee searches for a customer's invoices over a range of the last 12 months by default, the database must search 36 individual tables to satisfy a single request. Of those 36 tables that are searched, 24 of those tables won't return anything at all! When you multiply the work required to satisfy ONE request across an entire company and all of its customers, the default 'Max Rows' parameter can cause an OnDemand server to quickly become overloaded.

In the same situation, when Content Manager OnDemand is configured with an optimized segment size, one search hits 12 tables, and each table returns a result -- a much better work-to-result ratio, which requires less disk-related I/O and less CPU time. It's better in almost every way.